Measurement Systems Analysis (MSA) is a mystery for many, but it doesn’t need to be. Let’s start with a simple definition: MSA is an experiment that assesses the trustworthiness of your data collection efforts. The experiment tests for accuracy, for a shared understanding of Operational Definitions, and for whether different data collectors are doing things the same way. The goal of MSA is to reduce defects and variation within the data collection process itself.

MSA is often viewed as a statistically-based “recipe” that fits many situations. When that recipe works for your project it’s fairly easy to apply—but when it doesn’t fit, then what?

A Matter of Trust

It’s not about trusting yourself or the person collecting the data—it’s about trusting what the data is telling you. Two commonly used MSA methods are:

Crossed Gage Repeatability & Reproducibility (Crossed Gage R&R)

This is used to test measurements of continuous data by comparing results from repeated trials by multiple people.

- Example: A Project Leader needed to measure the thickness of a metal disc using a micrometer, which is subject to some operator variation. For their MSA, they selected ten discs whose thickness spanned the specification range and recorded the precise thickness of each. They randomly presented the discs to three different people, who recorded their measurements, and repeated the process until each person had measured each disc three times. The standard Crossed Gage R&R template works well for analyzing this type of data.
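For readers who want to see what a Crossed Gage R&R template is doing under the hood, here is a minimal sketch of the ANOVA method in plain Python. It assumes a balanced study (every person measures every part the same number of times, with at least two parts, two people and two trials) and always keeps the part-by-operator interaction term, so its numbers may differ slightly from what Minitab or SigmaXL report. The function name `gage_rr` and the sample readings are made up for illustration.

```python
from math import sqrt
from statistics import fmean


def gage_rr(data):
    """Crossed Gage R&R by the ANOVA method for a balanced study.

    data[i][j] is the list of repeated readings of part i by operator j.
    Returns variance components and %GRR (share of total study variation).
    """
    p, o, r = len(data), len(data[0]), len(data[0][0])

    grand = fmean(x for part in data for cell in part for x in cell)
    part_means = [fmean(x for cell in part for x in cell) for part in data]
    oper_means = [fmean(x for part in data for x in part[j]) for j in range(o)]
    cell_means = [[fmean(cell) for cell in part] for part in data]

    # Mean squares for parts, operators, interaction, and pure error.
    ms_part = o * r * sum((m - grand) ** 2 for m in part_means) / (p - 1)
    ms_oper = p * r * sum((m - grand) ** 2 for m in oper_means) / (o - 1)
    ms_inter = r * sum(
        (cell_means[i][j] - part_means[i] - oper_means[j] + grand) ** 2
        for i in range(p) for j in range(o)
    ) / ((p - 1) * (o - 1))
    ms_error = sum(
        (x - cell_means[i][j]) ** 2
        for i in range(p) for j in range(o) for x in data[i][j]
    ) / (p * o * (r - 1))

    # Variance components; negative estimates are truncated to zero.
    repeatability = ms_error
    interaction = max(0.0, (ms_inter - ms_error) / r)
    operator = max(0.0, (ms_oper - ms_inter) / (p * r))
    part_to_part = max(0.0, (ms_part - ms_inter) / (o * r))
    reproducibility = operator + interaction
    grr = repeatability + reproducibility
    total = grr + part_to_part

    return {
        "repeatability": repeatability,
        "reproducibility": reproducibility,
        "part_to_part": part_to_part,
        "pct_grr": 100.0 * sqrt(grr / total) if total > 0 else 0.0,
    }


# Made-up readings: 3 discs x 2 operators x 2 trials each (mm).
readings = [
    [[2.01, 2.02], [2.03, 2.02]],
    [[2.50, 2.51], [2.52, 2.52]],
    [[3.00, 2.99], [3.01, 3.02]],
]
result = gage_rr(readings)
print(f"%GRR = {result['pct_grr']:.1f}")
```

The smaller `pct_grr` is, the less of the variation you see comes from the measurement system itself rather than from real differences between parts.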

Attribute Agreement Analysis

This is used to test attribute (discrete) evaluations by comparing repeated judgments by multiple people.

- Example: A Project Leader needed to evaluate the paint quality for a kitchen appliance. For the MSA, they selected thirty units which ranged from perfect quality to clearly unacceptable. They randomly presented the units to two appraisers, who rated each unit as pass or fail, and repeated the assessment until each appraiser had evaluated each unit twice. The attribute agreement template works well for analyzing this type of data.
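The core of an attribute agreement analysis is simple counting, and the sketch below shows two of the usual percentages: how often each appraiser agrees with themselves, and how often all appraisers agree with each other. It is only an illustration. The function names and the sample ratings are made up, and the statistical packages also report figures such as kappa that are not computed here.

```python
def within_appraiser_agreement(ratings):
    """Percent of units each appraiser rated the same way on every trial."""
    return {
        appraiser: 100.0 * sum(len(set(trials)) == 1 for trials in units) / len(units)
        for appraiser, units in ratings.items()
    }


def between_appraiser_agreement(ratings):
    """Percent of units on which every rating from every appraiser matches."""
    per_unit = list(zip(*ratings.values()))  # one tuple of trial-lists per unit
    agreed = sum(
        len({rating for trials in unit for rating in trials}) == 1
        for unit in per_unit
    )
    return 100.0 * agreed / len(per_unit)


# Made-up ratings: 2 appraisers x 3 units x 2 trials each.
ratings = {
    "Appraiser A": [("pass", "pass"), ("fail", "fail"), ("pass", "fail")],
    "Appraiser B": [("pass", "pass"), ("pass", "pass"), ("pass", "pass")],
}
print(within_appraiser_agreement(ratings))
print(between_appraiser_agreement(ratings))
```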

A common recipe for the Crossed Gage R&R uses ten parts and three people, with each person measuring each part three times. If that works for you, great! If not, it can be adapted. If your project does not measure parts, work with what you do measure. If you can’t get 10 samples, use what you can get. Both Minitab and SigmaXL allow this with as few as two samples and two people. Of course it is better with more, but you have to work with what you’ve got. Both Minitab and SigmaXL provide similar, scalable templates for attribute agreement analysis with varying numbers of units, appraisers and evaluations.

1. But I’m All Alone

Reproducibility is especially important if there are multiple people gathering data since it’s measuring whether different people get the same results when measuring the same unit. But if you are the only one collecting data then you can simply use a copy of your measurements for the “other” person. You can ignore the results for reproducibility (it will show no variation anyway). As long as the repeatability error, whether you get the same results every time, is small (<10%), you’re in great shape!
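If you would rather see the repeatability number on its own, it can also be estimated directly from one person’s repeated measurements. The sketch below is an alternative to the copy-your-data approach, not what the Gage R&R templates do: it treats the study as a one-way analysis with units as the only factor, and it assumes every unit was measured the same number of times (at least twice). The function name and sample data are hypothetical.

```python
from math import sqrt
from statistics import fmean, variance


def repeatability_pct(data):
    """data[i] is the list of repeated readings of unit i by one person."""
    r = len(data[0])
    # Pooled within-unit variance: the repeatability component.
    repeat_var = fmean(variance(trials) for trials in data)
    # The variance of the unit means estimates (unit variance + repeat_var / r),
    # so subtract the repeatability share and truncate at zero.
    unit_var = max(0.0, variance([fmean(t) for t in data]) - repeat_var / r)
    total = repeat_var + unit_var
    return 100.0 * sqrt(repeat_var / total) if total > 0 else 0.0


# Made-up data: 4 units, each measured 3 times by the same person.
solo = [
    [10.1, 10.0, 10.1],
    [12.4, 12.5, 12.4],
    [15.0, 15.1, 15.0],
    [17.8, 17.8, 17.9],
]
pct = repeatability_pct(solo)
print(f"Repeatability = {pct:.1f}% of total variation; under 10%? {pct < 10}")
```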

2. But I Know My Watch Is Accurate

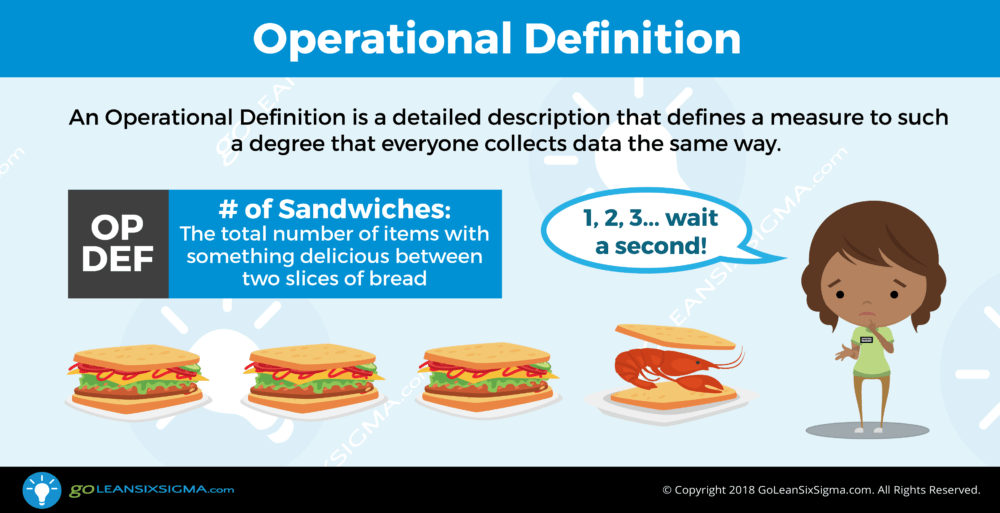

Many projects aim to reduce cycle time, which is measured with clocks (okay, iPhones). Almost all clocks are more than accurate enough for cycle time reduction projects—but what about taking the readings? This is where crystal clear Operational Definitions are critical. We need to be very specific about when the process starts and when it ends. If the process starts when the customer arrives, exactly when do we recognize arrival:

- When we see them drive in?

- When they walk through the door?

- When they get into line?

- When they arrive at our desk?

- When they greet us or ask for help?

There is no right choice—any of these will do—but it’s important that everyone gathering data is doing it the same way. In addition, everyone should be recording the time with the same precision. If you don’t nail this down, some may record minutes and seconds while others only minutes, and some may round to the nearest five minutes. If you don’t specify, your data collectors will decide for themselves.
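One quick way to spot this problem in data you have already collected is to look at how finely each person appears to be recording. The sketch below assumes times are stored as whole seconds and checks whether a collector’s values all fall on five-minute or one-minute marks. The function names and the candidate steps are assumptions for illustration, and with only a handful of readings a coarse step can show up by coincidence.

```python
def recording_step(seconds, candidate_steps=(300, 60, 1)):
    """Return the coarsest candidate step (in seconds) that divides every value."""
    if not seconds:
        return None
    for step in sorted(candidate_steps, reverse=True):
        if all(value % step == 0 for value in seconds):
            return step
    return None


def precision_report(times_by_collector):
    """Return each collector's apparent step and whether they all match."""
    steps = {name: recording_step(vals) for name, vals in times_by_collector.items()}
    consistent = len(set(steps.values())) == 1
    return steps, consistent


# Made-up cycle times in seconds from three data collectors.
cycle_times = {
    "Ann": [125, 310, 47, 602],   # minutes and seconds
    "Bob": [120, 300, 60, 660],   # whole minutes only
    "Cy": [300, 600, 900, 300],   # nearest five minutes
}
steps, consistent = precision_report(cycle_times)
print(steps, "consistent" if consistent else "NOT consistent")
```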

3. My Data Is Collected Automatically

If you can access automatic data collection, this can certainly make data collection easier, but how do you know it’s truly accurate? In order to test automatically collected data, you’ll need to learn how the measurement is actually made and try to duplicate it manually for ten or more measurements. If you obtain essentially the same results, you’re in business!
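What counts as “essentially the same” is worth deciding before you look at the numbers. Here is a minimal sketch of the comparison, assuming you have paired readings (the automatic value and your manual value for the same event) and a tolerance you have chosen yourself. The function name, the tolerance and the sample data are all hypothetical.

```python
from statistics import fmean


def compare_auto_to_manual(auto, manual, tolerance):
    """Summarize paired differences between automatic and manual readings."""
    if len(auto) != len(manual):
        raise ValueError("auto and manual must be paired, one-to-one")
    diffs = [a - m for a, m in zip(auto, manual)]
    return {
        "n": len(diffs),
        "mean_bias": fmean(diffs),                   # consistent over/under reading
        "max_abs_diff": max(abs(d) for d in diffs),  # worst single disagreement
        "within_tolerance": all(abs(d) <= tolerance for d in diffs),
    }


# Made-up example: ten cycle times in seconds, system log vs. stopwatch.
auto = [312, 298, 305, 330, 289, 301, 317, 295, 308, 322]
manual = [310, 299, 305, 328, 290, 300, 318, 294, 307, 321]
print(compare_auto_to_manual(auto, manual, tolerance=3))
```

A mean bias far from zero suggests the system starts or stops its clock at a different point than your Operational Definition says it should.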

4. It’s in the Computer!

There was a time when many believed information in computers was guaranteed to be true. We know this isn’t so, but many still act as if it were. Errors happen because:

- Data can be entered by human beings, and we are fallible. There are opportunities to mis-read, mis-key, or enter data in the wrong fields.

- We don’t always understand what we are looking at. Databases often use abbreviated names for data fields which can easily be misunderstood.

- Database queries may not be fully debugged. They may not be providing the information we expected.

There isn’t much we can do about past data entry errors, but we can address the other two. A database should have a data dictionary, which describes precisely what’s contained in each data field. Sadly, this documentation detail is sometimes overlooked. If you don’t have a data dictionary, you may need to confer with your IT professional to find out what the data really means. Don’t accept a “hand waving” answer. If they can’t tell you exactly how the data got there, they may not be digging deep enough to know what the data really means.

Simple databases are essentially flat files, like a big Excel spreadsheet. These are easy to use. Other databases, especially those in Enterprise Resource Planning (ERP) systems, are relational: essentially a series of tables linked together.

If you need data that isn’t available from an existing report, a Structured Query Language or SQL (often pronounced “sequel”) query may need to be written. These can be tricky and may not work as desired the first time, so it’s important to check the data for reasonability. Here are some ways to do that:

- Confirm the data range: If you specified a date range or other limits on the data, scan your data to confirm that it contains what you expected.

- Look for anomalies: If there is obviously incorrect data (negative numbers that don’t belong, values that are unrealistic, etc.), then there’s an issue.

- Check good entries: If possible, select ten lines of good data at random and compare them to the source documents. These may be paper records or another electronic form.
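The three checks above can be sketched in a few lines once the query result has been exported. This example assumes each row is a dictionary with a date field and a numeric field that should never be negative. The function name, the field names and the sample rows are hypothetical, so adapt them to whatever your query actually returns.

```python
import random
from datetime import date


def check_query_result(rows, start, end, date_field, value_field,
                       max_value=None, sample_size=10, seed=None):
    """Sort rows into out-of-range, anomalous, and good; sample the good ones."""
    out_of_range, anomalies, good = [], [], []
    for row in rows:
        value = row[value_field]
        if not (start <= row[date_field] <= end):
            out_of_range.append(row)   # outside the requested date range
        elif value is None or value < 0 or (max_value is not None and value > max_value):
            anomalies.append(row)      # missing, negative, or unrealistically large
        else:
            good.append(row)
    # Random rows to compare by hand against the source documents.
    rng = random.Random(seed)
    to_verify = rng.sample(good, min(sample_size, len(good)))
    return {"out_of_range": out_of_range, "anomalies": anomalies, "to_verify": to_verify}


# Made-up query result for January 2024.
rows = [
    {"order_date": date(2024, 1, 5), "quantity": 12},
    {"order_date": date(2024, 1, 9), "quantity": -3},      # negative quantity
    {"order_date": date(2023, 12, 30), "quantity": 7},     # before the range
    {"order_date": date(2024, 1, 20), "quantity": 40},
    {"order_date": date(2024, 1, 22), "quantity": 99999},  # unrealistic
]
report = check_query_result(rows, date(2024, 1, 1), date(2024, 1, 31),
                            "order_date", "quantity", max_value=1000, seed=1)
print(len(report["out_of_range"]), len(report["anomalies"]), len(report["to_verify"]))
```

Rows that fall outside the date range are reported only as out of range here, so a row with both problems is counted once.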

If you are drawing records of subjective evaluations from a database, it is important to confirm that the evaluation method used to appraise the units is adequate (probably using attribute agreement analysis).

5. Measurement Destroys My Parts!

This can be a tough one. If your test destroys what you are measuring, you clearly cannot repeat the test. Testing material strength by pulling a sample until it breaks is an example of destructive measurement. Here are a couple of work-arounds:

- Create identical (or nearly so) samples and measure them.

- If possible, divide the sample into several pieces and then measure each piece.

- If possible, identify a non-destructive characteristic that is known to correlate to the destructive measure.

If there is not a viable solution, then it’s one of the few situations where MSA may not be feasible.

6. Measures Are Done Outside by a Trusted Source

Customer Satisfaction data is often provided by outside firms like Press Ganey or J.D. Power. If the source you use has been independently validated, the data can be used without an MSA. Be sure to ask your provider to advise you of the margin of error associated with the data.

Call to Action

Prior to writing this, I reviewed 28 Black Belt Storyboards and found that only 50% included an MSA. While some gave plausible reasons, most offered weak excuses. That’s a lost opportunity and potentially detracts from the quality of the decisions that were based on the data.